| May – November, 2019 |

This project is a game I developed at Animal Logic Academy for the UTS Data Arena. I was one of the lead game developers and programmers for the game, working closely with layout, art and effects artists to get this monumental game made.

The Initial Pitch (Unity Prototype)

For the initial pitch we had eight weeks to come up with a ten-minute pitch, with demonstrations and footage to back up our idea. I was initially put on the team pitching the idea of a ‘game in the data arena where you had to balance to survive against an enemy in VR’. The initial idea used a pirate ship being attacked by a Kraken, however we were advised to steer away from this and try to make something more unique. This is what we came up with:

Subaqua is an interactive and cinematic experience for the UTS Data Arena, where the audience must work together as a team to control how the experience unfolds. A group of 7-8 players enter the Data Arena and function as a group of explorers, who travel in their space submarine in search of a portal deep underwater that will allow them to escape this dangerous planet. By moving left and right in the space, they control the direction the ship travels. However, a giant alien sea monster, controlled by a player in VR, must try to follow and destroy the ship as it invades its territory.

For anyone unaware of what the Data Arena is (since it is a very niche piece of technology), it is a cylindrical space at the University of Technology Sydney, running a Linux environment and equipped with motion tracking, where you can see projections on the wall.

Multiple people can enter the space and have a shared virtual experience, however it has not been optimized for ease of access. Most experiences in the space use video. There had been only very limited uses of Unity in the past, and at the time our final project was the first time anyone had been able to get Unreal Engine working in the space.

On top of this, only being able to test in the room for one hour every week made working with this space extremely challenging.

We decided to go for an underwater theme for the game, as it made sure the balance mechanic made logical sense (balancing a submarine to turn it left and right) and it harnessed the field of view of the Data Arena (it has a 360° horizontal view but only a 45° vertical view, which meant that players couldn’t see directly above or below them).

As I was the only programmer in our pitch group, it was my responsibility to ensure the tech was feasible. I felt that this called for a prototype, so I began making one in Unity, using Git for source control. The main difficulty of the prototype was working with two independent sets of third-party hardware: Virtual Reality headsets and the Data Arena.

As both of these spaces were immersive, motion sickness was our main concern. In the tests I did, it seemed that people who were susceptible to motion sickness were affected in both spaces, however how predictable the movement was seemed to be the major factor. Another element that added to this was that if something large, such as a long wall, moved close to the center of the Data Arena (where the cameras were rendering from), the object would distort heavily on the screen, which exacerbated the feeling of motion sickness.

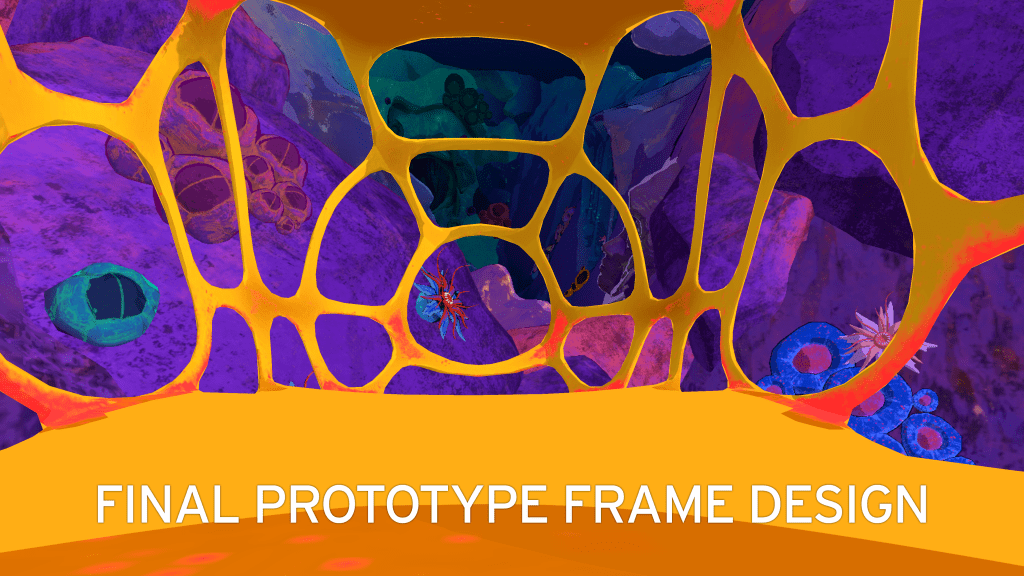

With this in mind I was able to build a decent prototype, which allowed us to test the speed at which the Data Arena should move and arrive at an experience that limited motion sickness. Another trick we used was to put a ship frame around the Data Arena cameras. Having something that was tied to the position of the player in the Data Arena helped people feel less motion sick as well. We also used the frame’s design to lead the eye of the player forward to the front of the Data Arena, which most people found to be the area where the least motion sickness occurred.

Similarly, for the VR player, finding an intuitive way to move that wouldn’t break their immersion or cause motion sickness was also important. After testing a few different methods, including brainstorming how they would be implemented from an animation point of view, we arrived at moving in the direction the player’s hand was pointing, which would allow them to feel like they were Superman flying through the ocean. This allowed the player to look around the space without changing the direction they were going, and also allowed them to attack with their other hand, even when they were moving.

We also had to explore the tracking technology the Data Arena provided. In our tests we found it was very accurate when the trackers were visible, however the origin was around 2.5 meters off, and later on we had to add a 30° offset to ensure that the front of the ship wasn’t directly on a seam in the Data Arena. For this version of the prototype we decided to put trackers on beanies, as they would be quite high up and visible, and yet unobtrusive. However, we realised we would need to change them for the final game, as sharing beanies between different players could pose health risks such as lice.
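As a rough illustration of that kind of correction, here is a minimal Python sketch (not the project's Unity or Unreal code). The 2.5 m and 30° figures come from the paragraph above; the direction of the origin offset, the 2D floor-plane simplification and the names `ORIGIN_OFFSET`, `YAW_OFFSET_DEG` and `calibrate` are assumptions made purely for the example.

```python
import math

# The 2.5 m and 30 degree values are from the text. The axis along which the
# origin was off is NOT stated there, so this direction is a hypothetical choice.
ORIGIN_OFFSET = (2.5, 0.0)   # metres, assumed to lie along the tracker's x axis
YAW_OFFSET_DEG = 30.0        # rotation that moves the ship's front off the seam


def calibrate(raw_x, raw_y, origin=ORIGIN_OFFSET, yaw_deg=YAW_OFFSET_DEG):
    """Map a raw tracker floor position to room-centred game coordinates."""
    # Shift so that the centre of the room becomes (0, 0).
    x = raw_x - origin[0]
    y = raw_y - origin[1]
    # Rotate counter-clockwise about the room centre by the yaw offset.
    theta = math.radians(yaw_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    return (x * cos_t - y * sin_t, x * sin_t + y * cos_t)
```

Applying the shift before the rotation keeps the rotation centred on the middle of the room, so a player standing at the true centre always maps to (0, 0) regardless of the yaw offset.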

Through this experience, I also had some time to play around a bit in Houdini, particularly with Height Field Terrains, destructible meshes and a bit of level generation. To make level design simpler, we decided to go with simple Height Field valleys for the underwater world, as this made asset generation easier to automate and would allow for some user-controlled precision. For this I also made an above-water mountain for the world, to function as a way to direct the player’s eye forward early in the experience.

Also, for one of the areas later in the map, I made a way to randomly generate pillars in the space which guaranteed there was a way for the ship to proceed through it safely. This was the beginning of me looking into changing the difficulty of the experience depending on the players’ wants, however due to time constraints and changing engines, I was unable to implement this in the final build.
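The original generator was built in Houdini and its details are not described here, so the following is only a Python sketch of one common way to get that guarantee: carve a connected path through a grid first, then scatter pillars only in the remaining cells. The grid representation, the `density` parameter and the function name `generate_pillars` are all assumptions for illustration.

```python
import random


def generate_pillars(rows, cols, density=0.5, seed=None):
    """Return (pillars, path) for a rows x cols grid of cells.

    pillars: set of (row, col) cells that contain a pillar.
    path: ordered list of (row, col) cells that are always left clear.
    """
    rng = random.Random(seed)
    col = rng.randrange(cols)
    path = [(0, col)]
    for row in range(1, rows):
        # Step forward one row, then optionally drift one column sideways,
        # so consecutive path cells always share an edge.
        path.append((row, col))
        new_col = min(cols - 1, max(0, col + rng.choice((-1, 0, 1))))
        if new_col != col:
            col = new_col
            path.append((row, col))
    clear = set(path)
    # Every cell off the path gets a pillar with probability `density`.
    pillars = {
        (r, c)
        for r in range(rows)
        for c in range(cols)
        if (r, c) not in clear and rng.random() < density
    }
    return pillars, path
```

In a sketch like this, `density` would be the natural knob for difficulty: a higher value fills in more of the cells around the safe route without ever blocking it.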

However, I did manage to get destructible meshes working for this project, as something that the monster could attack or the ship could collide with, and this element did make it into the final project.

After eight weeks of prototyping we successfully pitched the project against four other prototypes, and it was selected to continue development in the upcoming months.

Final Game (Unreal Engine without VR)

Once our project was selected we were given the option to decide what roles we wanted to have in the upcoming development. As I wanted to do more asset development I requested that I work on modelling, however I also understood that our cohort was severely understaffed with programmers and I was the only game developer, so I also said that I’d be happy to consult for the project.

At the beginning of the project I was one of the people who suggested that we move the project over to Unreal Engine, as it was a much more user-friendly engine for more visual people (which a large part of our cohort was). We also decided to use Perforce for version control, as it was easy for everyone to use while still providing good version control. However, after being out of the project for a while, due to having to hop back onto the other project, the ‘Bounty Hunter’, and being out of office for two weeks due to family matters, I learned that the VR element of the game had been scrapped due to a lack of programmers, and that there were problems on the gameplay and asset sides.

Due to this, I decided that to make the development of the game successful, I would need to hop onto the project as a developer and provide some assistance, even though it would mean I would be unable to do the asset work that I wanted to explore. The main issue I identified was difficulty communicating between the artists and the programmers, which became a bigger problem when the lead art director and modeller left the cohort unannounced in the middle of development.

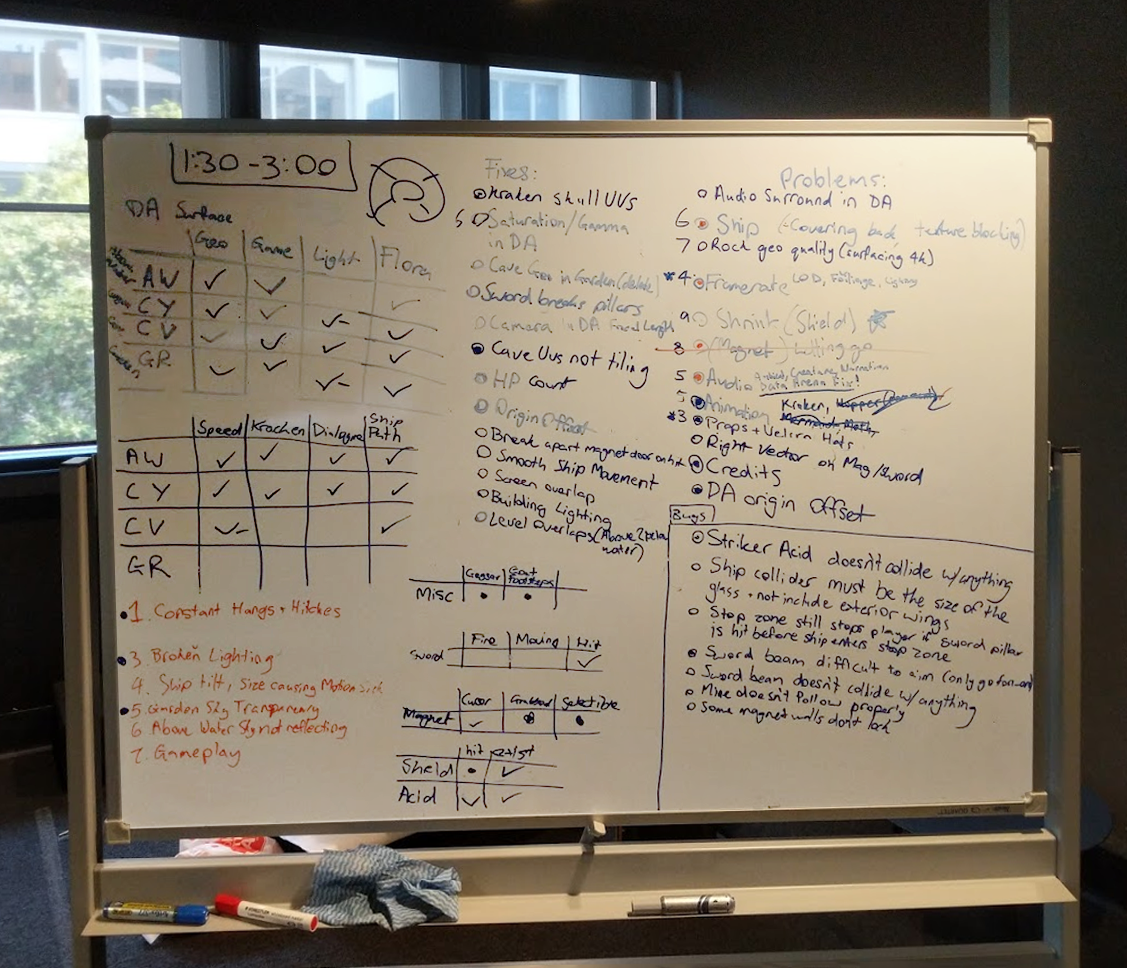

Because of this, and technical issues beginning to surface with the Data Arena, I decided to implement some Scrum methodologies, which I had learned in my game development bachelor, to try and redirect the project. I began by running morning Scrum meetings to ensure development was running smoothly. I also wrote user stories for all the features that needed to be implemented, and went through them with the core stakeholders to rank their importance.

After a while of doing this, I found that it helped break down some barriers in communication that some people had before, and helped move the development forward at a steady pace, where everyone was working on something constantly.

One of the whiteboards we used to track our progress

Throughout the entire development of this project I was assisting with trying to brainstorm feasible gameplay mechanics with the other game designer. We had planned four main forms of player interaction in the experience which were controlled by physical interactions in the real world:

- The position of the players controls the tilt of the ship, which controls how far left and right it goes. To do this we put a positional tracker on some mechanic goggles, which the players could wear on their head (similar to how the beanies functioned in the first prototype).

- A shield prop, which when moved to the edge of the screen would cause a shield to appear, protecting the ship from acid projectiles that native creatures fire at it. To do this we had a tracker on the top of a foam shield prop, and when it moved away from the center of the room, we calculated the angle and would lerp a shield in-game to that position.

- A sword prop, which when swung would fire a beam in the direction of the swing. This was used to destroy rocky pillars or hit things at a distance. To do this we had a foam sword prop with a positional/rotational tracker on the blade, from which we would calculate the angle and speed of the swing.

- A magnet prop, which would create a pulsing cursor on the screen where the magnet was pointing. When the magnet pointed at a metal object, such as a door or a mine, the magnet would attach to it and allow the player to move it out of the way of the ship, or into something else. To do this we had a foam magnet prop with a positional tracker on it. We would perform a ray cast based on where we calculated the tracker to be, and if it hit an asset that was tagged as ‘magnetic’ it would grab it. Depending on the asset it would do different things. If it grabbed a door, the player could move it left or right along a spline, and when it reached the end of its spline, or if the player moved the cursor too far away from the door, it would detach. If it grabbed a mine, it would find the closest point on an invisible curved spline mesh and lerp the mine to that. This would cause the mine to follow an invisible path and ensure it didn’t get stuck in areas it wasn’t intended to go. If the cursor went outside the invisible path, the mine would detach, and if the mine hit another asset, it would explode and destroy any destructible assets in that area.
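The maths behind three of these interactions can be sketched in a few lines. This is a simplified 2D Python illustration, not the Unreal implementation used in the game: the function names, the normalised steering range, the `min_radius` dead zone and the use of a polyline in place of the spline mesh are all assumptions made for the example.

```python
import math


def steering_input(player_xs, half_width):
    """Average lateral offset of the players, clamped to the range [-1, 1]."""
    if not player_xs:
        return 0.0
    mean_x = sum(player_xs) / len(player_xs)
    return max(-1.0, min(1.0, mean_x / half_width))


def shield_angle(x, y, min_radius):
    """Angle in degrees of the shield prop around the room centre, or None
    while the prop is still closer to the centre than min_radius."""
    if math.hypot(x, y) < min_radius:
        return None
    return math.degrees(math.atan2(y, x)) % 360.0


def lerp_angle(current, target, t):
    """Move current towards target by fraction t along the shortest arc."""
    delta = (target - current + 180.0) % 360.0 - 180.0
    return (current + delta * t) % 360.0


def closest_point_on_path(point, path):
    """Closest point to `point` on a polyline given as a list of (x, y)."""
    px, py = point
    best, best_dist = None, float("inf")
    for (ax, ay), (bx, by) in zip(path, path[1:]):
        dx, dy = bx - ax, by - ay
        length_sq = dx * dx + dy * dy
        # Project the point onto the segment and clamp to its end points.
        t = 0.0 if length_sq == 0 else ((px - ax) * dx + (py - ay) * dy) / length_sq
        t = max(0.0, min(1.0, t))
        cx, cy = ax + t * dx, ay + t * dy
        dist = math.hypot(px - cx, py - cy)
        if dist < best_dist:
            best, best_dist = (cx, cy), dist
    return best
```

Interpolating the shield along the shortest arc avoids it sweeping the long way around the room when the prop crosses the 0°/360° boundary, and snapping the mine to the nearest point on the path is what keeps it from drifting into areas it was never meant to reach.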

As these mechanics were being workshopped within the Data Arena, and the Data Arena had many problems with tracking that only began occurring towards the end of the year, it was difficult to get playtesters in. However, as a game developer, I knew the importance of getting people in to test the game, so I went out of my way to get people from the office into the experience during the day and programmed for the project after work hours instead. This provided some valuable insight into the players’ reactions to parts of the game and, with some overtime, allowed us to make the game more user-friendly.

Also, as the main artistic directors had left the project, I had to assist with managing the asset development and planning the player experience. As I had a lot of experience with surfacing and texturing from my work on the ‘Bounty Hunter’, I assisted the lead surfacer to make sure the assets would fit together in the level, and advised on whether any modelled assets didn’t yet appear in the game and, if so, where they should appear. Once texturing was finished I also helped advise on the effects creation, as we needed some effects to make the game feel more impactful and appealing.

An example of something I did here was work with both surfacers and effects artists to make ‘interactable’ objects such as doors, destructible pillars and mines more visible to the players. I did this by colour coding the three main tools: Sword-Red, Magnet-Gold, Shield-Teal. These were all colours that we had not used anywhere else in the game, so they stood out. To make it clear which assets could be interacted with using which tool, we made the assets glow the same colour as the tool that could interact with them.

We were also aware that these colours could look similar to people with certain forms of colour blindness. As we were unable to change the colours (due to all other assets already being surfaced), we tried to push the contrast between each of these colours, as well as having unique particles and materials for each interactable asset to emphasise this difference.

During this project we were also working with some sound students from outside the cohort. We had planned for the game to have some form of narration to help ease the players into the experience, so we needed that, as well as some sound effects and music. Drawing on my experience of working alongside sound students for ‘Paradise GATES Farm’, I helped them work out what specific audio they needed to make and implemented it into the game programmatically.

When development on this project was complete we had a showcase night where we allowed groups of 12-15 people into the data arena to experience the prototype. After asking several people about their experiences nobody complained about feeling motion sick, which was very good, and many people enjoyed the experience thoroughly.

Even so, I still feel like there were many things that could have used improvement, and plenty of things that could have been tested further. I am still happy that I was able to work on this project. It was the most challenging game I had worked on up to that year, and completing it to a standard where strangers in the animation and games industry enjoyed it is a testament to how much effort my cohort and I were able to put into it, despite its challenges.